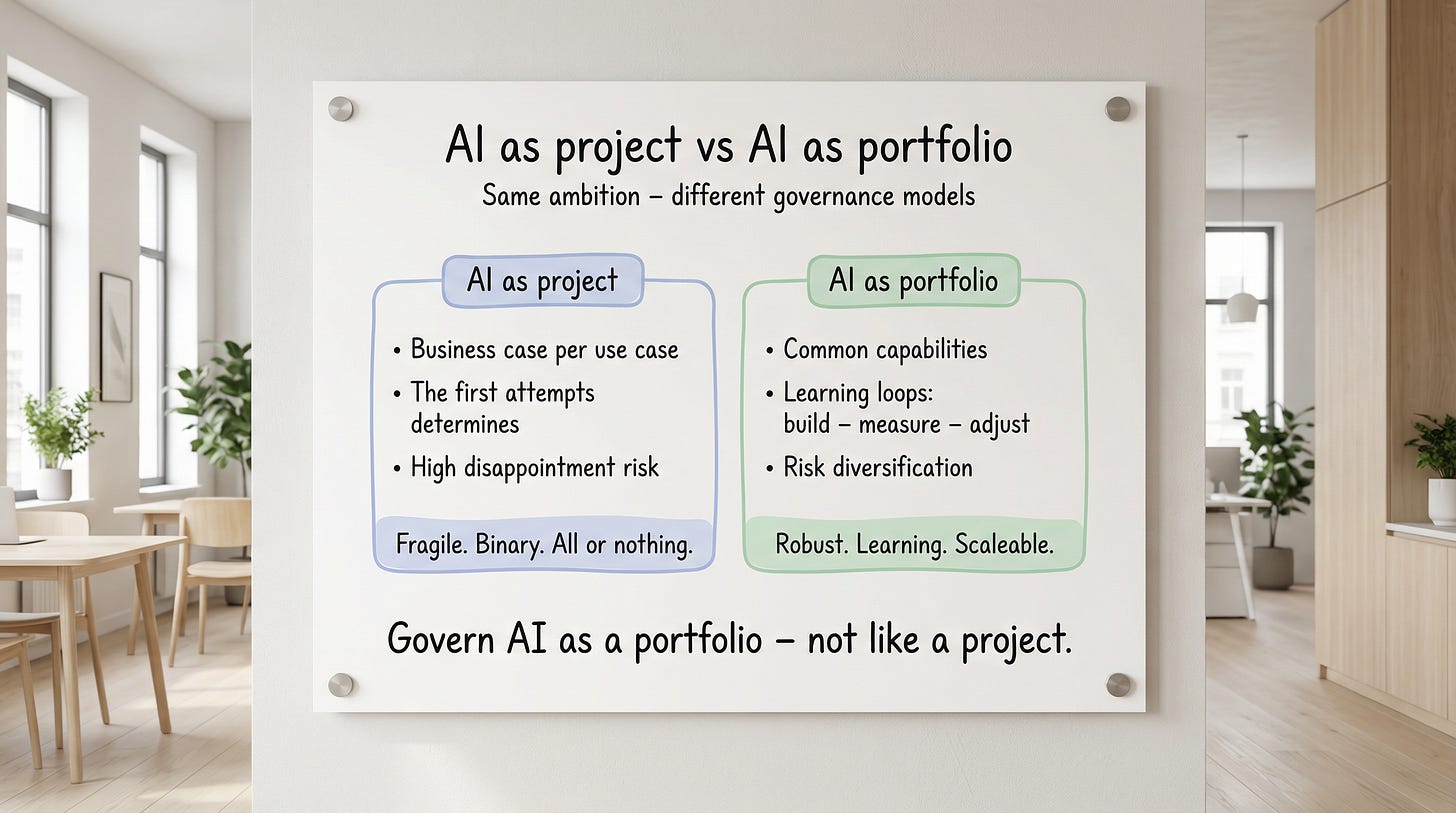

Stop running AI as projects—start running AI as an investment portfolio

If individual AI initiatives don’t meet expectations, it’s all too easy to conclude that “AI doesn’t work for us.” But most of the time, the problem is the governance model.

Many organizations start with AI like this: pick a promising use case, write a business case, present it to leadership, run a pilot, realize the expectations weren’t met—and then question the entire AI effort.

But AI doesn’t behave like “regular” projects. Outcomes for individual bets are often uncertain and the value is unevenly distributed. On top of that, most of the value sits in what happens around the technology: changed ways of working, better decisions, and new business opportunities.

You can see it in the numbers:

PwC’s 2026 Global CEO Survey: only 12% of CEOs say AI has so far delivered both cost and revenue benefits, and 56% still see no significant financial benefit.

BCG’s 2025 study (over 1,250 companies): only 5% create AI value “at scale,” while 60% see no material value at all despite investments.

IDC/Lenovo study (cited by CIO): 88% of AI pilots don’t reach broad deployment. For every 33 pilots, only 4 make the jump to production.

If you run AI like traditional projects, the result is often a pilot graveyard. The first pilots become a “trial”—and if they don’t scale, the trial also becomes the verdict: “The AI initiative was a bad idea.”

AI advisor and author Tobias Zwingmann captures exactly this pattern in his article “Why AI Roadmaps beat AI projects.” He describes how securing the first budget usually isn’t the hard part; the initiative can instead die when the first prototype doesn’t deliver what people hoped for. His reasoning aligns closely with my own experience and is an important starting point for this article.

The solution is to change the governance model: AI needs to be run like an investment portfolio, with multiple initiatives, shared foundational capabilities, and a roadmap where shut-down initiatives become lessons rather than failures.

When the first pilot becomes the verdict

The pattern is often predictable.

An idea shows potential. Many people see the possibilities. Then reality hits: data access, integration, information security, legal constraints, operations/governance, training, and process change.

When this gets difficult, it’s easy to mistake friction for final proof. That’s when having the right governance model becomes critical.

If leadership starts asking, “Should we really continue?” the initiative has often already lost momentum. Not because AI lacks potential, but because you built a governance model where too much depended on the first attempt.

Eventually, the organization might buy Copilot licenses and feel like that’s “enough” AI.

Why AI differs from IT

In much of IT (utility IT), you get impact by implementing a technical solution and rolling it out. It’s not always easy, but it’s often fairly plannable.

AI, on the other hand, works more like business transformation. Value emerges when everyday behaviors change, when decisions improve, and when workflows are redesigned.

That means entirely different factors become decisive:

data quality and concepts/definitions

organizational receptiveness

learning capacity

entrepreneurship and product capability (getting things into production and actually used)

clear ownership over time

This is also why “we ran a pilot” is rarely the same as “we’ve built the capability.”

Think like a venture capitalist and build a learning loop

Venture capitalists don’t invest in a single company and hope it carries everything. They spread risk and give themselves multiple chances.

A simplified but useful mental model is: out of ten investments, most fail, a few do okay, and one becomes the rocket that pays for the whole thing—with a strong margin.
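To make the venture-capital mental model concrete, here is a minimal Python sketch with made-up numbers (the payoffs are hypothetical, not data from any study): ten equal bets where six fail, three do okay, and one is the rocket. It compares the return on the whole portfolio with the return you would report if you judged everything on the first bet alone.

```python
from dataclasses import dataclass


@dataclass
class Bet:
    name: str
    cost: float    # capital committed (hypothetical units)
    payoff: float  # value returned (hypothetical units)


def multiple(bets: list[Bet]) -> float:
    """Total value returned divided by total capital invested."""
    return sum(b.payoff for b in bets) / sum(b.cost for b in bets)


# Hypothetical portfolio of ten equal-sized bets:
# six fail, three "do okay", and one is the rocket.
bets = (
    [Bet(f"fail-{i}", 1.0, 0.0) for i in range(6)]
    + [Bet(f"okay-{i}", 1.0, 1.5) for i in range(3)]
    + [Bet("rocket", 1.0, 20.0)]
)

winners = sum(1 for b in bets if b.payoff > b.cost)
print(f"{winners}/{len(bets)} bets beat their cost")      # 4/10 bets beat their cost
print(f"portfolio multiple: {multiple(bets):.2f}x")      # portfolio multiple: 2.45x
print(f"first-bet multiple: {multiple(bets[:1]):.2f}x")  # first-bet multiple: 0.00x
```

Under these assumed payoffs, only four of ten bets beat their cost, yet the portfolio as a whole returns about 2.45x. Judging the effort on a first bet that happened to fail would have reported zero.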

AI efforts are often similar. Outcomes are uncertain, and the biggest wins rarely come from the first attempt. They come from a sequence of initiatives where each step makes the next step better.

That’s why AI should be seen as an investment portfolio with a learning loop: build, measure, adjust—without getting stuck because of individual setbacks.

Uncertainty and learning-loop thinking aren’t equally easy in all organizations, though. In an organization with a strong Operational Excellence culture, uncertainty can easily trigger more control: more councils, more review cycles, and more “safe” wording. The effect is slow progress—or getting stuck entirely. To pick up speed, you often need to borrow behaviors from other organizational cultures built for faster learning loops, pace, and decision-making authority.

Pilot success is not the same as scalable value

A pilot often answers the question: “Can it work?” Production answers the question: “Can it work every day, with real users, real data, and real risk?”

Some typical gaps:

Data: the pilot is built on a subset; reality is full of exceptions and unclear definitions.

Integration: it takes time to connect inputs/outputs, permissions, logging, and workflows.

Ownership: when the project team moves on, it becomes unclear who owns the day-to-day results.

Actual usage: if ways of working and behaviors don’t change, the value is zero—even if the tech is good.

This is also why a “successful pilot” can sometimes be dangerous: it can create expectations without building the prerequisites for scaling.

Combination effects: the whole beats any single project

Project logic becomes even more misleading because AI initiatives affect one another.

An initiative that improves data quality, concept models, and access can feel “too expensive” to justify through a single use case—especially if you require every initiative to carry its full foundational cost on its own.

But if several future initiatives reuse the same foundational work, the business case changes completely. Then the portfolio should carry the investment—not the first pilot.

This is also where many miss the point: in a portfolio, “Project A” can be what makes “Project B” possible and “Project C” profitable. Trying to price everything in isolation leads you to reject the very building blocks that make scale possible.
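The arithmetic behind this argument can be sketched in a few lines. The figures are hypothetical: a shared foundation (data definitions, access, monitoring) costing 300 units, and three use cases that each cost 100 to build and return 250. Priced in isolation, every use case is rejected; priced as a portfolio that pays for the foundation once, the same three use cases are net positive.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    benefit: float      # expected value over the evaluation horizon (hypothetical units)
    direct_cost: float  # cost specific to this use case (hypothetical units)


def standalone_net(uc: UseCase, foundation_cost: float) -> float:
    """Project logic: one use case carries the full foundation cost alone."""
    return uc.benefit - uc.direct_cost - foundation_cost


def portfolio_net(use_cases: list[UseCase], foundation_cost: float) -> float:
    """Portfolio logic: the foundation is paid once and reused by every use case."""
    return sum(uc.benefit - uc.direct_cost for uc in use_cases) - foundation_cost


FOUNDATION = 300.0  # shared data definitions, access, monitoring (hypothetical figure)
cases = [UseCase("A", 250.0, 100.0), UseCase("B", 250.0, 100.0), UseCase("C", 250.0, 100.0)]

for uc in cases:
    print(uc.name, standalone_net(uc, FOUNDATION))  # A -150.0, then B -150.0, C -150.0
print("portfolio", portfolio_net(cases, FOUNDATION))  # portfolio 150.0
```

With these assumed figures, two use cases exactly cover the foundation (2 × 150 = 300) and a third makes it net positive. The specific numbers don't matter; the point is that the same foundation flips from “too expensive” to clearly worthwhile once more than one initiative reuses it.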

The portfolio method: from ideas to a roadmap in four steps

Here’s a concrete, repeatable approach:

Set a threshold. Decide and quantify the minimum benefit that’s worth pursuing. Without a threshold, everything is important—and then nothing gets prioritized.

Map bottlenecks without talking about AI. Where is time, quality, or money being wasted in your core flows? Talk to the people who do the work—not just the people who report on the work.

Filter against the threshold. Which problems are big enough to belong on the roadmap—i.e., worth spending capacity, risk-management effort, and change muscle on?

Prioritize feasibility first. Once the benefit is already “good enough,” the question becomes: what can we get into production fastest, with reasonable risk and clear ownership?

Mini example: “Customer service has 18 recurring case types → 5 are large enough to pass the value threshold → start with 2 where the data is already well structured.”
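The four steps can be expressed as a small filter-and-rank routine. This is a minimal sketch, not a prescribed scoring model: the `Bottleneck` fields, the 75,000 threshold, and the case-type names and benefit figures are all hypothetical, chosen only to reproduce the shape of the mini example (18 case types, 5 above the threshold, start with 2 whose data is well structured). Step 2 happens in conversations with the people doing the work, so here it appears only as the input list.

```python
from dataclasses import dataclass


@dataclass
class Bottleneck:
    name: str
    annual_benefit: float  # estimated value if solved (hypothetical units)
    data_ready: bool       # is the data already well structured?
    has_owner: bool        # is there a clear business owner for day-to-day results?


def build_roadmap(
    candidates: list[Bottleneck], threshold: float, start_with: int = 2
) -> tuple[list[Bottleneck], list[Bottleneck]]:
    # Step 3: keep only problems whose benefit clears the threshold from step 1.
    qualified = [c for c in candidates if c.annual_benefit >= threshold]
    # Step 4: rank by feasibility first (ready data, clear owner);
    # benefit size is only a tie-breaker among equally feasible problems.
    ranked = sorted(
        qualified,
        key=lambda c: (c.data_ready, c.has_owner, c.annual_benefit),
        reverse=True,
    )
    return qualified, ranked[:start_with]


# Step 1 (hypothetical): the minimum annual benefit worth pursuing.
THRESHOLD = 75_000

# Step 2 output (hypothetical): 18 recurring customer-service case types.
small = [
    Bottleneck(f"case-{i:02d}", 20_000 + 1_000 * i, i % 2 == 0, True)
    for i in range(13)
]
large = [
    Bottleneck("billing disputes", 180_000, False, True),
    Bottleneck("address changes", 90_000, True, True),
    Bottleneck("delivery delays", 150_000, False, False),
    Bottleneck("refund requests", 120_000, True, True),
    Bottleneck("contract questions", 80_000, True, False),
]
candidates = small + large

qualified, first_wave = build_roadmap(candidates, THRESHOLD)
print(len(candidates), "->", len(qualified), "->", [c.name for c in first_wave])
# 18 -> 5 -> ['refund requests', 'address changes']
```

Sorting on feasibility before benefit is what encodes step 4: once a problem clears the threshold, its exact size matters less than whether it can reach production quickly. In this made-up data, the largest problem (billing disputes) is deliberately left out of the first wave because its data isn’t ready yet.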

Execution: key principles

Portfolio governance without execution is just a list. Here are principles that help the portfolio survive—and start delivering.

Treat AI as a product, not a project. Assign a lifecycle owner. Decide who is responsible for day-to-day outcomes—not just delivery. That’s the difference between “a pilot we did” and “a capability we use.”

Actual usage beats model accuracy. Ask early: do people use this when no one is watching? Have behaviors changed? Would anyone complain if you turned it off for a day? If the answers are no, the value is almost always zero, even if the model is impressive.

Build the boring capabilities early. They don’t sound exciting, but they’re the difference between a demo and production:

data definitions and quality

access and traceability

ownership (business + tech)

monitoring and feedback loops

risk, security, and privacy

The point: these investments shouldn’t be “forced into” every single initiative. They should be built as shared assets for the portfolio.

Run multiple initiatives, but with stop criteria. Running a portfolio doesn’t mean “everything at once.” It means deliberate prioritization, where you know in advance what’s required to continue, pause, or stop. The key is that stopping becomes learning, not shame. Otherwise, the organization stops trying.
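Stop criteria work best when they’re agreed before the results come in. Here is a minimal sketch, assuming two hypothetical criteria (a minimum share of intended users who actually use the solution, echoing the point that usage beats model accuracy, and the portfolio’s value threshold) plus a pause rule for initiatives waiting on a shared foundational capability. Every decision returns a reason, so a stop is recorded as a lesson rather than silently dropped. The class names, fields, and numbers are illustrative only.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    CONTINUE = "continue"
    PAUSE = "pause"
    STOP = "stop"


@dataclass
class StopCriteria:
    min_usage: float    # minimum share (0..1) of intended users actually using it (hypothetical)
    min_benefit: float  # the portfolio's value threshold (hypothetical units)


@dataclass
class Checkpoint:
    usage_share: float           # share of intended users active in a typical week
    measured_benefit: float      # benefit observed so far, annualized
    blocked_on_foundation: bool  # waiting on shared data, access, or monitoring?


def review(cp: Checkpoint, crit: StopCriteria) -> tuple[Decision, str]:
    """Apply criteria agreed in advance; always return a reason to record."""
    if cp.blocked_on_foundation:
        return Decision.PAUSE, "resume when the shared capability is in place"
    if cp.usage_share >= crit.min_usage and cp.measured_benefit >= crit.min_benefit:
        return Decision.CONTINUE, "usage and value both meet the agreed bar"
    return Decision.STOP, "document what was learned and reuse it in the next initiative"


crit = StopCriteria(min_usage=0.4, min_benefit=75_000)
lessons: list[str] = []
for cp in [
    Checkpoint(0.55, 90_000, False),
    Checkpoint(0.10, 90_000, False),
    Checkpoint(0.30, 20_000, True),
]:
    decision, reason = review(cp, crit)
    if decision is Decision.STOP:
        lessons.append(reason)  # stopping feeds the learning loop
    print(decision.value, "-", reason)
# continue - usage and value both meet the agreed bar
# stop - document what was learned and reuse it in the next initiative
# pause - resume when the shared capability is in place
```

The order of the checks is a design choice: an initiative blocked on a missing shared capability is paused rather than stopped, because the fix belongs to the portfolio, not to the initiative itself.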

Conclusion

Project logic makes AI fragile. Portfolio logic makes AI robust.

Portfolio thinking does two things at once. It reduces the risk that the first pilot becomes the verdict. And it makes it rational to invest in the shared foundational capabilities that enable scaling.

It also often requires a cultural shift: from “we must be certain before we do” to “we must learn in a controlled way while we do.”

Note! The content on this blog reflects my personal opinions and does not represent my employer. As the publisher, I am not responsible for the comments section. Each commenter is responsible for their own posts.