Why AI Gets Stuck in Some Organizations

AI rarely fails for technical reasons. It fails when it collides with a culture that’s optimized for stability rather than learning.

Too often, I meet organizations that say they’re “investing in AI” when the investment, in practice, consists of PowerPoints, policies, and proofs of concept. When it’s time to use AI for real, everything grinds to a halt: rounds of alignment across multiple layers, overly cautious legal interpretations, and a risk‑minimization mindset of “better to refrain than risk doing something wrong.” The result is paper products and “organizational complication” instead of organizational development.

At the same time, there are organizations that move fast. They test, learn, change how they work, and realize benefits early. So why are some fast and others slow?

I think a big part of the answer is cultural — and that it’s connected to organizations’ value disciplines.

Three value disciplines (and an important trade‑off)

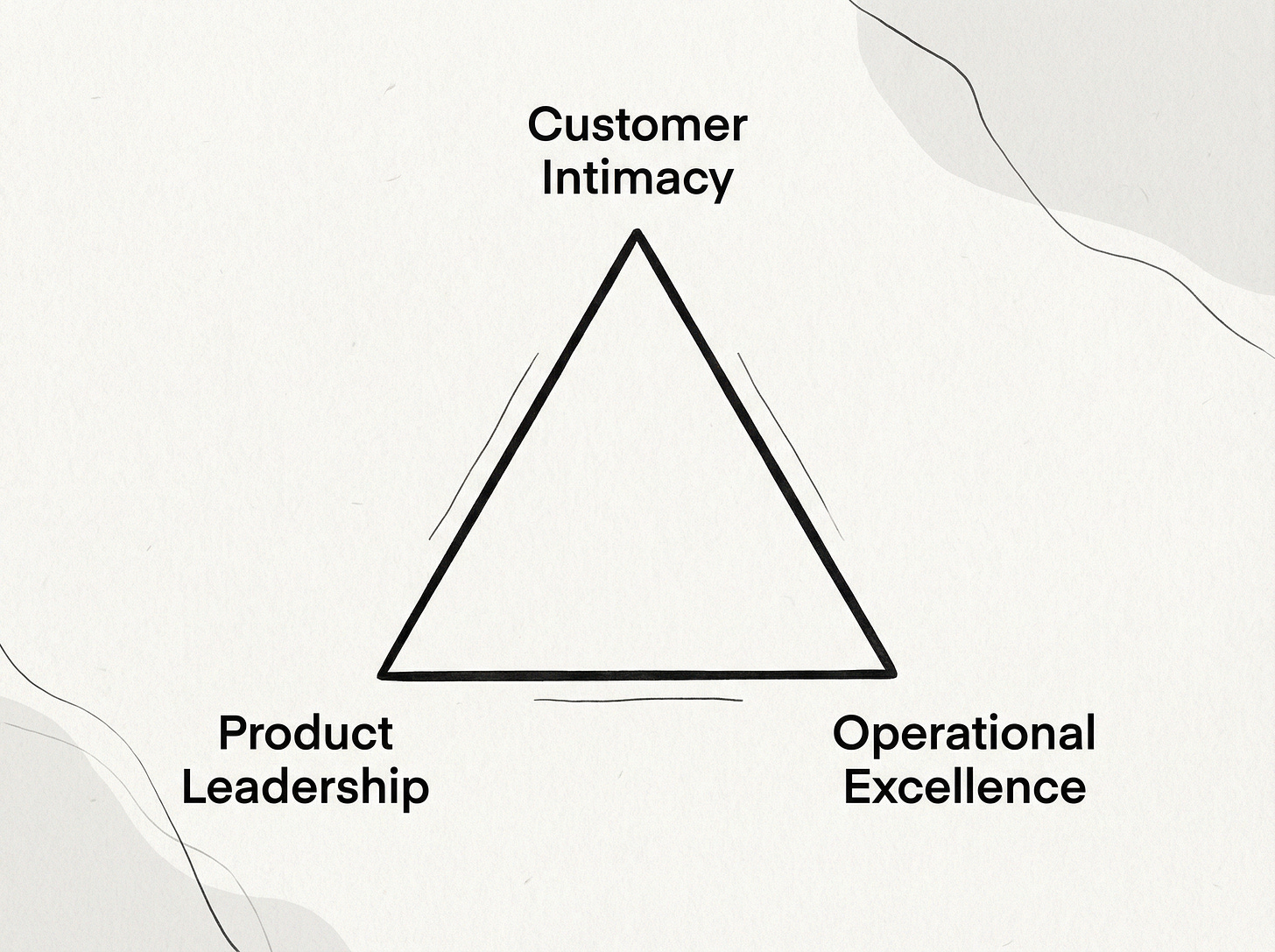

In the 1990s, Michael Treacy and Fred Wiersema popularized the idea of three value disciplines: Customer intimacy, Product leadership, and Operational excellence.

The core of the model is that if you want to be truly strong in one discipline, it requires sacrifices in the others. You have to prioritize.

This can sound like strategy. In practice, it’s culture: which behaviors are rewarded, which are punished, and what the organization reflexively does when uncertainty arises. What gets rewarded becomes the norm, the norm becomes culture, and culture gets baked into the walls, where it stays even when the outside world shifts.

The value disciplines

1) Customer intimacy: staying closest to reality

The promise: “We understand you better than anyone else and adapt to you.”

Customer intimacy is built on accepting variation. Two customers/users don’t always get the same solution — that’s the whole point. You invest in relationship‑building, advisory support, tailoring, onboarding, support, and continuous improvement based on real usage.

Strengths: responsiveness, relevance, trust, high impact from what you do.

You sacrifice: pure efficiency and standardization. Tailoring costs money and creates complexity.

2) Product leadership: being fastest with what’s new

The promise: “We lead the way. You get the best, first.”

Product leadership isn’t about having everything perfectly “tidy” in every detail. It’s about the ability to learn quickly. You build the capability to rapidly test hypotheses, measure and evaluate, make decisions under uncertainty, and iterate. Failures are seen as data — not shame — as long as they’re controlled and lead to learning.

Strengths: speed, innovation pace, the ability to pivot quickly when reality changes.

You sacrifice: stability, predictability, and sometimes short‑term efficiency. Innovation is “waste” — until it works. And that’s the point.

3) Operational excellence: being the most reliable and efficient

The promise: “It works. Every time. Cheap, safe, and with consistent quality.”

Operational excellence is built on minimizing variation. If you can do the same thing the same way a thousand times, you get lower cost, fewer errors, and more consistent quality. The organization becomes robust: operations are king, deviations are handled systematically, and change happens in a controlled manner.

Strengths: quality, stability, compliance, scalable delivery.

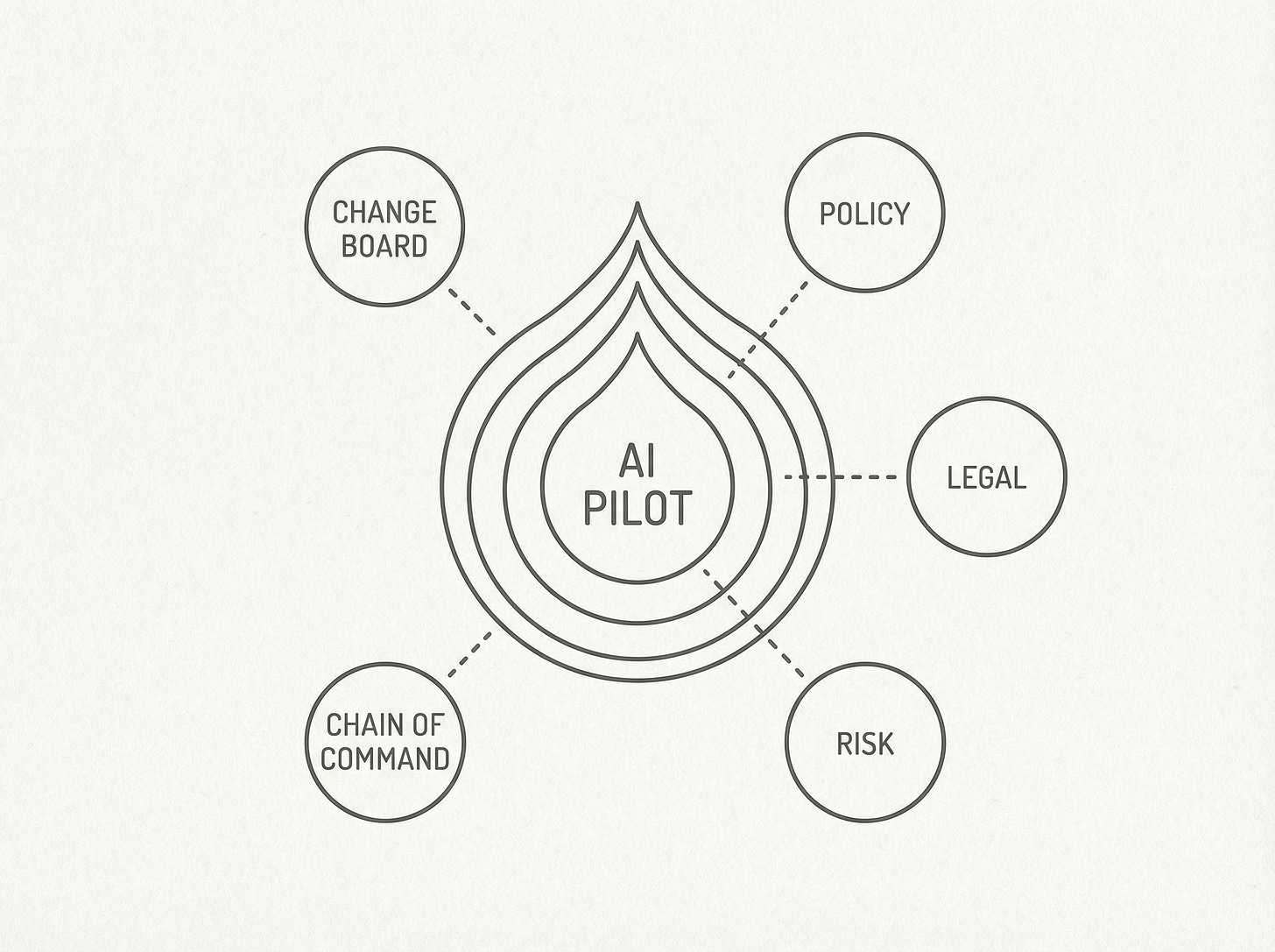

You sacrifice: flexibility and pace of change. To be truly great at operational excellence, you need governance mechanisms: change boards, controls, processes, and solid documentation. All of that is rational — but it has a side effect: change moves slowly.

Why AI “likes” two value disciplines — and clashes with the third

Adopting AI is, at its core, a change management issue. You don’t become “AI‑driven” by rolling out a tool. You become AI‑driven when ways of working, decisions, and everyday behaviors change.

It’s also made harder by the fact that requirements only become clear once you try, results can be difficult to predict, and risks have to be understood in practice. AI is rarely “install and done.”

That’s why it often moves faster in cultures that are already built for rapid learning loops (Product leadership) or that have high adaptability and a strong focus on user value (Customer intimacy).

And that’s why Operational excellence often has the toughest time: what makes operational excellence strong in day‑to‑day operations makes it slow in change and organizational development.

Operational excellence tends to turn uncertainty into an argument for more alignment: more stakeholders, more councils, more consultation rounds, more “safe” wording.

The paradox is: the newer and more uncertain something is (like AI), the more governance gets triggered — and the organization ends up formally active (policies, guidelines, PowerPoints) but practically passive (ways of working and everyday behaviors don’t change).

AI can provide enormous leverage for operational excellence (automation, decision support, quality assurance). But the path there often collides with the culture’s own reflexes.

Conclusion

It’s important to say this clearly: Operational excellence is not “worse.” It’s often a response to a reality where mistakes are expensive — especially in banks, universities, healthcare, government agencies, and other critical public services. Their mission isn’t to be first; it’s to be reliable.

Treacy and Wiersema make an important point about value disciplines: you can only prioritize one value discipline, but you also need to become good enough at the others. In practice, that means an operational‑excellence organization that wants to realize AI benefits needs to borrow a bit of behavior from the other disciplines: a dose of product leadership (learning loops, speed, mandate) and a dose of customer intimacy (adapting for customers/users and real needs).

And that doesn’t happen by itself. It requires space, courage, and mandate from the very top — and that you ask this question:

Which value discipline do we have, and how do we run our AI initiative so it can make its way through our own culture without getting stuck in it?
